Jul 1, 2025

Sony at CVPR 2025: advancing computer vision technology

Each year at the IEEE/CVF Conference on Computer Vision and Pattern Recognition, thousands of researchers gather to share new work about the next generation of computer vision and AI algorithms, products, and intelligence systems. As a Platinum sponsor at CVPR 2025 in Nashville, Tennessee, Sony researchers contributed 18 papers and presented at 6 conference workshops.

At the exhibition booth, research teams from Sony Group Corporation, Sony Semiconductor Solutions (SSS), Sony Interactive Entertainment (SIE), and Sony AI showcased technical demos and presentations about the essential role of computer vision in the future of creation.

Visit the Sony CVPR 2025 site for a comprehensive overview of works presented at this year’s conference. Find a snapshot of CVPR activities from Sony researchers below.

SIE: interactive robots and neural wrappers

Sony Interactive Entertainment (SIE) received a “Highlighted Paper” distinction for their paper titled “Generating 6DoF Object Manipulation Trajectories from Action Description in Egocentric Vision.” This paper focuses on training robots to manipulate tools or objects in common environments. Everyday environments, such as kitchens, are full of diverse tools and appliances of all shapes and sizes. Learning to use these tools correctly is particularly challenging for interactive robots. This paper proposes a framework that leverages large-scale ego- and exo-centric video datasets to extract diverse manipulation trajectories at scale. From these extracted trajectories, the team developed generation models based on visual and point cloud-based language models. The research team validated these models and established a training dataset and baseline models for the novel task of generating six degrees of freedom (6DoF) manipulation trajectories.

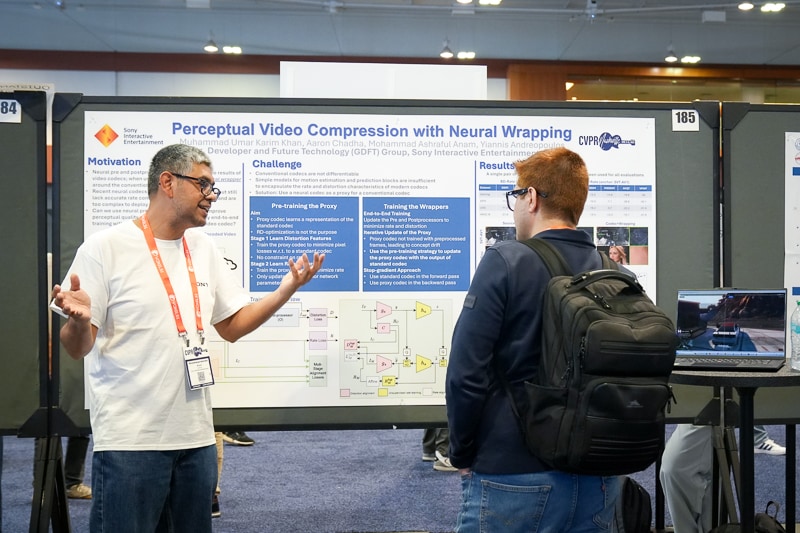
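For readers new to the term, a 6DoF pose combines three translational and three rotational degrees of freedom, and a manipulation trajectory is an ordered sequence of such poses. The Python sketch below only illustrates that data structure and is not the paper's code: the `Pose6DoF` class, the `path_length` helper, the choice of meters and roll/pitch/yaw angles, and the example trajectory are all assumptions made here.

```python
from dataclasses import dataclass
import math


@dataclass(frozen=True)
class Pose6DoF:
    """One waypoint: 3 translational + 3 rotational degrees of freedom.

    Units (meters, radians) and the roll/pitch/yaw convention are
    illustrative assumptions, not taken from the paper.
    """
    x: float
    y: float
    z: float
    roll: float
    pitch: float
    yaw: float


def path_length(trajectory):
    """Sum of straight-line distances between consecutive positions."""
    return sum(
        math.dist((a.x, a.y, a.z), (b.x, b.y, b.z))
        for a, b in zip(trajectory, trajectory[1:])
    )


# Hypothetical trajectory for an action such as "pour from the cup":
# lift the object 0.3 m, then tilt it a quarter turn in place.
pour_trajectory = [
    Pose6DoF(0.0, 0.0, 0.0, 0.0, 0.0, 0.0),
    Pose6DoF(0.0, 0.0, 0.3, 0.0, 0.0, 0.0),
    Pose6DoF(0.0, 0.0, 0.3, math.pi / 2, 0.0, 0.0),
]

print(len(pour_trajectory), "waypoints,", path_length(pour_trajectory), "m travelled")
```

Note that the last step changes only the orientation, so it adds nothing to the path length. This is why a position-only trajectory would not be enough to describe tool use.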

In another accepted paper, “Perceptual Video Compression with Neural Wrapping,” SIE researchers demonstrated the significant potential of neural wrapper components to enhance standards-based video coding. The team introduced novel techniques to jointly optimize neural pre- and post-processors with a conventional codec in the middle. This approach improves efficiency and quality, achieving significant rate savings and better perceptual scores compared to modern video codecs.

SSS: advancements in imaging, sensing, and perception

Researchers from Sony Semiconductor Solutions (SSS) shared new work about how sensors can enhance and expand the field of imaging, as well as evolve how we interact with entertainment experiences.

The team presented advancements in Neural Radiance Fields (NeRF) in a paper titled “MultimodalStudio: A Heterogeneous Sensor Dataset and Framework for Neural Rendering across Multiple Imaging Modalities.” The team’s solution, MultimodalStudio (MMS), expands the capability of NeRFs by incorporating a dataset (MMS-DATA) with 32 scenes captured across five imaging modalities: RGB, monochrome, near-infrared, polarization, and multispectral. It also includes a framework (MMS-FW) to handle data from multiple imaging devices. Experiments show that combining these modalities creates better renderings than using single modalities alone. The dataset and framework are publicly available to support multimodal rendering research.

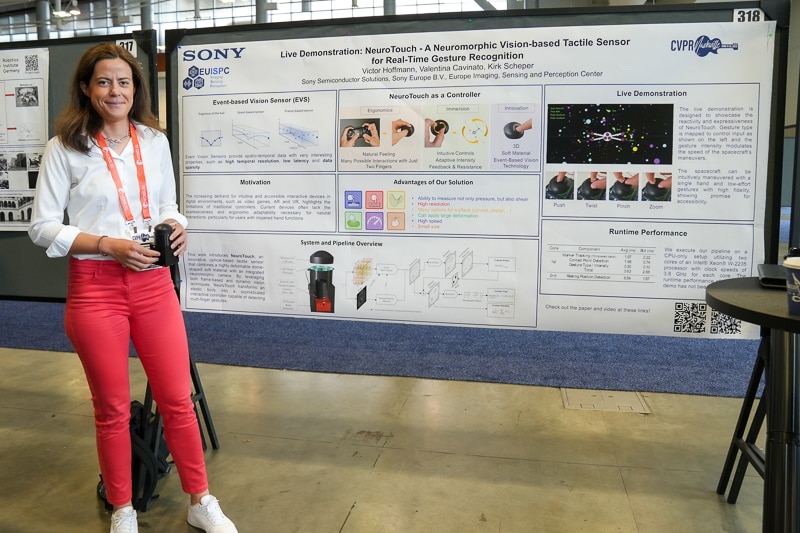

Live at the Sony booth, SSS demoed NeuroTouch - A Neuromorphic Vision-based Tactile Sensor for Real-Time Gesture Recognition. NeuroTouch uses a soft, dome-shaped material and an integrated neuromorphic camera to detect multi-finger gestures. It tracks markers on the surface with event-based methods, detects contact points, and classifies movements into five gesture types with varying intensity. In the demo, the team showcased the NeuroTouch sensor as an input paradigm for gaming, showing its potential for entertainment applications.

SSS members also demonstrated the Multispectral Sensing Solution, which combines Sony’s first multispectral image sensor with dedicated signal processing software for tasks such as spectrum reconstruction and spectrum-based classification. The sensor has a multispectral filter formed on the photodiode of each pixel, making it possible to capture multiple wavelengths of light simultaneously. Through signal processing, images with up to 41 bands can be reconstructed to recover the spectrum of a scene. Because the solution can capture the composition of different substances as well as subtle color differences that are imperceptible to the human eye, it can be used in applications like material sorting, contaminant detection and inspection, and quality management. Learn more about how this core tech can advance the agriculture sector and more on the SSS website.

Sony AI: the future of creation and sensing

Researchers from Sony AI presented 12 papers across the main conference and workshops. Visit the Sony AI blog for a comprehensive overview of this work, and find highlights from the booth below.

In their paper “Noise Modeling in One Hour: Minimizing Preparation Efforts for Self-supervised Low-Light RAW Image Denoising,” Sony AI researchers introduce a noise synthesis pipeline that drastically cuts denoising time. The central challenge in this work is to make noise modeling more efficient while maintaining high-quality output. By minimizing costly setup and calibration, this work demonstrates how AI image enhancement tools might efficiently scale outside of the lab.

In an accepted paper titled “Stretching Each Dollar: Diffusion Training from Scratch on a Micro-Budget,” Sony AI researchers present “MicroDiT,” a text-to-image diffusion transformer that demonstrates a more efficient method for training diffusion models. The team proposes a strategy called “deferred patch masking,” which pre-processes image patches using a “patch mixer.” With this method, the model retains semantic context on a lower budget. This solution demonstrates the potential to democratize access to high-quality diffusion models with more efficient generative modeling.
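The ordering described above, mixing patches first and masking afterwards, can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not the MicroDiT implementation: the function names, the averaging-based `mix_patches` stand-in for a learned patch mixer, and the 0.75 mask ratio used in the example are all hypothetical choices made here.

```python
import random


def patchify(image, patch):
    """Split an H x W grid (list of rows) into flattened patch x patch tiles."""
    height, width = len(image), len(image[0])
    tiles = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            tiles.append([image[r][c]
                          for r in range(top, top + patch)
                          for c in range(left, left + patch)])
    return tiles


def mix_patches(tiles, weight=0.5):
    """Toy stand-in for a patch mixer (assumption): blend every tile with the
    mean of all tiles so each one carries some information about the rest."""
    count, dim = len(tiles), len(tiles[0])
    mean = [sum(tile[i] for tile in tiles) / count for i in range(dim)]
    return [[(1 - weight) * tile[i] + weight * mean[i] for i in range(dim)]
            for tile in tiles]


def deferred_mask(tiles, mask_ratio, rng):
    """Mix all tiles first, then drop a random fraction of the mixed tiles."""
    mixed = mix_patches(tiles)
    keep = max(1, round(len(mixed) * (1 - mask_ratio)))
    kept_idx = sorted(rng.sample(range(len(mixed)), keep))
    return kept_idx, [mixed[i] for i in kept_idx]


# 8 x 8 toy "image" split into 2 x 2 patches gives 16 tiles.
image = [[float(r * 8 + c) for c in range(8)] for r in range(8)]
tiles = patchify(image, patch=2)
kept_idx, kept_tiles = deferred_mask(tiles, mask_ratio=0.75, rng=random.Random(0))
print(len(tiles), "tiles,", len(kept_tiles), "kept after masking")
```

Because masking happens after mixing, the few tiles that survive already contain a contribution from the tiles that were dropped. This is one way to read the claim that the model retains semantic context while processing fewer patches.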

“ReRAW: RGB-to-RAW Image Reconstruction via Stratified Sampling for Efficient Object Detection on the Edge” demonstrates a new pipeline for training models directly on RAW, data-rich images. ReRAW enables the creation of high-quality synthetic RAW images for training compact object detection models. Operating directly on RAW eliminates the need for ISPs or extra domain adapters. This approach boosts the performance of computer vision models in mobile devices and embedded systems.

In a paper titled “MMAudio: Taming Multimodal Joint Training for High-Quality Video-to-Audio Synthesis,” the Sony AI team proposes a new model that synthesizes high-quality, context-aware sound for video. MMAudio enhances semantic alignment, temporal precision, and audio quality by jointly training on text, audio, and video data, rather than just video-audio pairs or fine-tuned text-to-audio models. This work addresses the core challenge of generating sound from visual content. MMAudio opens up new possibilities for content creation across the entertainment landscape.

Sony team members enjoyed connecting with the computer vision research community in Nashville. To learn more about career opportunities across Sony’s R&D teams, visit our Careers page.